Bicycles for the Mind

Sentience and How to Multiply Your Own Intelligence

Weekly writing about how technology and people intersect. By day, I’m building Daybreak to partner with early-stage founders. By night, I’m writing Digital Native about market trends and startup opportunities.


Hey Everyone 👋,

We’re hiring at Daybreak!

We’re looking for an EA / Office Manager to keep the trains running at the firm. Here’s the job posting on LinkedIn. If you’re interested or have any leads, please let me know or have them fill out the LinkedIn application. There’s no “right” background for this role, but we’re looking for two main things: (1) a people person, since this role means being a face of Daybreak and interacting with our founders and LPs, and (2) an execution machine who can get stuff done and not drop balls. We’ll offer competitive cash comp as well as carry in the funds. Plus you’ll get to work with me and Jared, and we like to think we’re fun to be around :)

Without further ado, on to this week’s piece…

Bicycles for the Mind

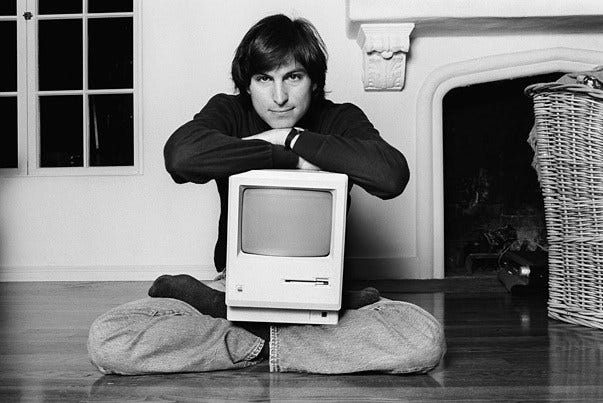

Steve Jobs was famous for calling the personal computer a bicycle for the mind.

The phrase is typically associated with the launch of Macintosh in the mid-80s, but there’s evidence that Jobs used the metaphor as early as 1980, when Apple was just three years old. The metaphor is a good one: clearly computers have been tools that amplify human intellect and curiosity.

AI is the natural evolution of the metaphor. AI helps your mind move faster and further; few would disagree with that. But AI does so with someone else’s intelligence. Claude is my new best friend—Daybreak could barely function anymore without Claude—but Claude isn’t me. I think of Claude like a hyper-productive employee, not like myself. What about getting more mileage out of your own mind? This is the more literal interpretation of Jobs’s metaphor: how can you get a bicycle for your own specific, individual, unique mind?

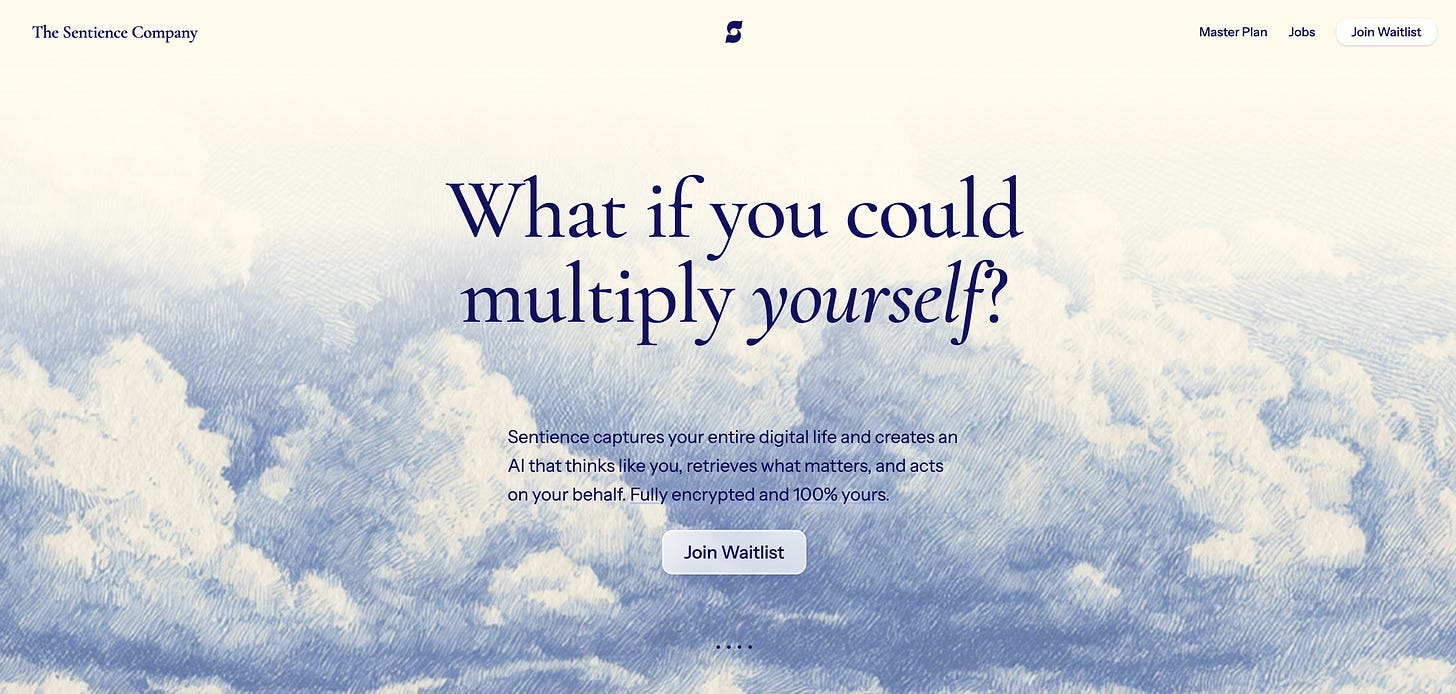

Digital clones, also called digital twins, are one of the biggest ideas in AI. How can we replicate a person’s intelligence, consciousness, sentience? That final word is the name of one of our Daybreak companies. Sentience comes out of stealth today.

Sentience builds a digital version of you that understands your knowledge, reflects your voice, and can operate with your context. ChatGPT and Claude are incredible tools, but they’re trained on massive, generalized datasets. They’re optimized for statistically likely responses, which means that over time outputs converge and intelligence becomes increasingly centralized within a handful of platforms.

Sentience, in contrast, creates a personal model that individuals shape and own over time. You become your own model, and your Sentience becomes distinct to you.

How I Use Sentience

I’ve been using Sentience for the past few months, and it’s become central to how I operate. The product works by first ingesting data: I’ve imported my email, calendar history, Slack threads, notes, and ChatGPT + Claude messages. This gives me a high-fidelity personal model that behaves… like me.

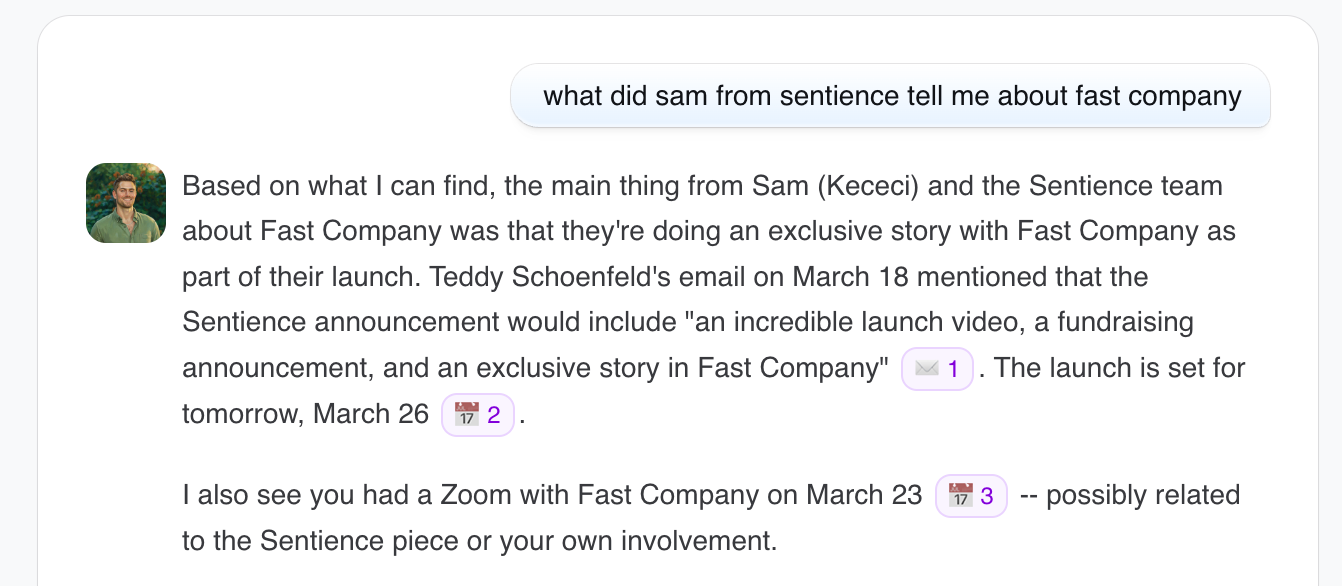

The use cases vary. I probably use Sentience the most for recall. A dozen times a day I can ask, “What did so-and-so say about such-and-such?” An actual example from this week was wondering what Sam (the founder of Sentience) had mentioned to me about their launch in Fast Company:

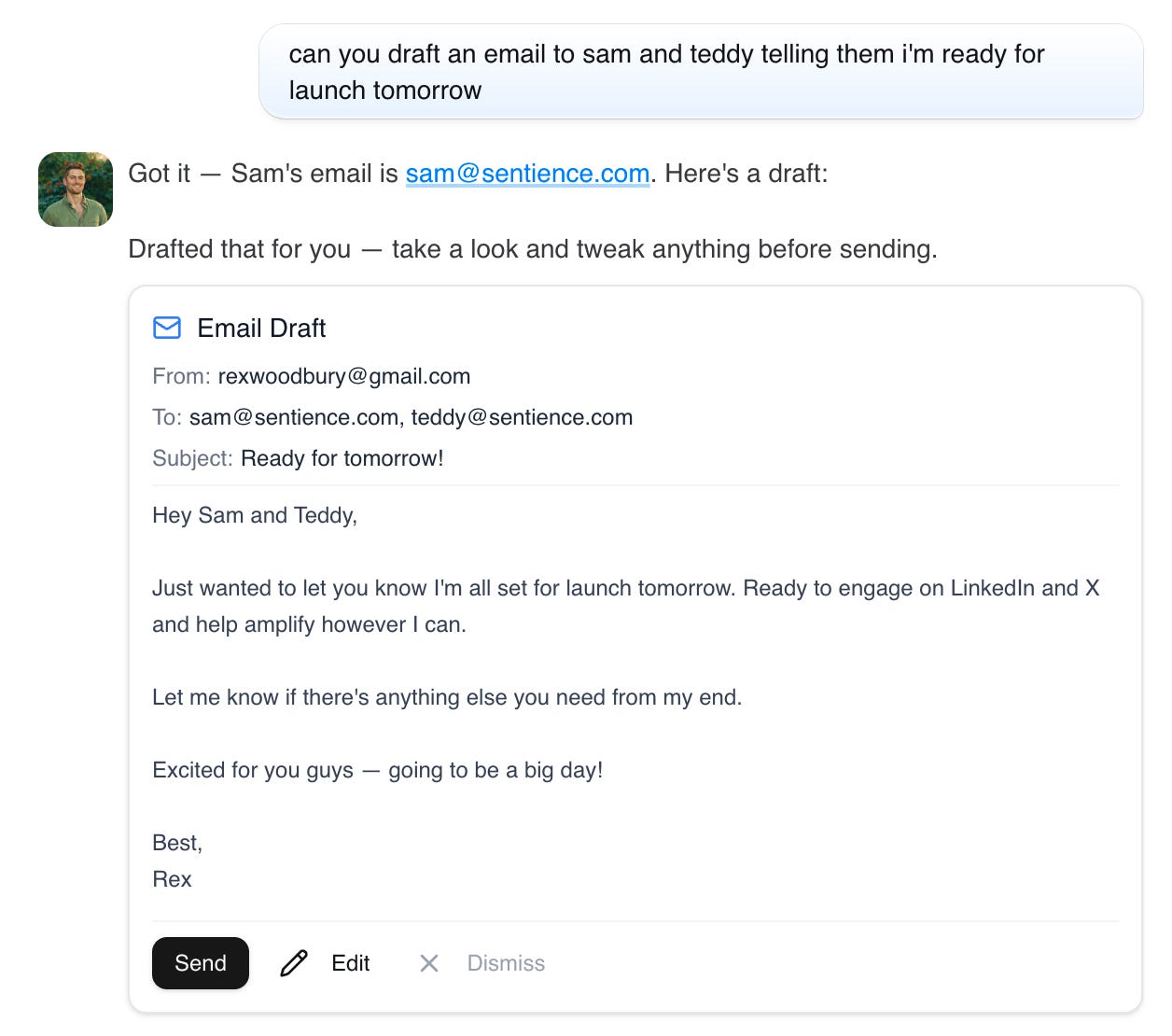

But I can also ask my Sentience to act on my behalf. Spinning up a response to Sam and Teddy takes two seconds:

I’m not a big “Best” guy in email sign-offs, so you can see some kinks to work out here, but my Sentience tends to learn and improve over time, meaning “Best” has its days numbered. This is a simple example of Sentience working on my behalf, but the use case goes much further. Another early beta user is a teacher, who uses Sentience to write lesson plans on her behalf.

A final example use case: Sentience is effective when other people need information from me and can query my Sentience directly. Example questions that people can ask my Sentience:

My brother might ask me what time my flight arrives for my visit.

Jared, my partner at Daybreak, might ask me to remind him what revenue target I mentioned last week for one of our portfolio companies.

A Daybreak founder might ask me if I have any good benchmarks on engineer compensation.

All of these could flow through me, of course. But I might be tied up in a meeting, on a flight without Wi-Fi, or asleep. Quick requests are easy for my Sentience to handle. Another important note: clearly privacy is paramount here. I don’t want my colleagues or LPs to access my personal texts any more than I want my family to access portfolio company information. There are (lots of) safeguards in place, and you can closely control who accesses what information.
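For the technically curious, here is one way to picture "who accesses what." This is a minimal sketch under my own assumptions, not how Sentience actually implements its safeguards: the `Memory` class, the `scope` labels, and the `ALLOWED_SCOPES` policy are all hypothetical names made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Memory:
    text: str
    scope: str  # hypothetical label, e.g. "family" or "work"


# Hypothetical policy: which scopes each audience may read.
ALLOWED_SCOPES = {
    "family": {"family"},
    "colleague": {"work"},
}


def visible_to(memories, audience):
    """Return only the memories whose scope the audience is allowed to read."""
    # Audiences missing from the policy get an empty set, so they see nothing.
    allowed = ALLOWED_SCOPES.get(audience, set())
    return [m.text for m in memories if m.scope in allowed]


memories = [
    Memory("Flight arrival time for the family visit", scope="family"),
    Memory("Revenue target for a portfolio company", scope="work"),
]

print(visible_to(memories, "family"))
print(visible_to(memories, "colleague"))
```

The design choice worth noticing is the default: an audience that isn't listed in the policy sees nothing, rather than everything.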

Memory and Context

Two words that I think about when I think about Sentience: memory and context.

It’s important to note that Sentience isn’t an app. Rather than replacing existing software, Sentience exists above it as a memory and identity layer. The word “memory” is key here. One framework for thinking through what applications will emerge from AI (courtesy of Alfred Lin) is to think about improvements in new models and how those improvements create new startup opportunities.

If you think back to mobile, there were big businesses built around distinct features of the iPhone:

Camera: Instagram

GPS: Uber

Gyroscope: Strava

Push Notifications: Duolingo

Touchscreen: Tinder (swipe)

These features created new capabilities that created the “why nows” for massive new technology companies.

You can repeat the exercise for AI.

A key breakthrough in recent models is memory. Prediction #16 in our 26 Predictions for 2026 was: “AI finally gets its memory—and this explodes open use cases in both consumer and enterprise.” Sentience is a great example. Memory is integral to Sentience’s functionality, and the product essentially becomes the memory layer traversing your devices and applications. I can access Sentience on my laptop and on my phone; I can use the Sentience app or message Sentience directly through iMessage or Slack.

Switching gears to context:

In December, Jaya Gupta wrote an excellent piece on context graphs, defining a context graph as a “living record of decision traces stitched across entities and time so precedent becomes searchable.” Over time, Gupta argues, “that context graph becomes the real source of truth for autonomy—because it explains not just what happened, but why it was allowed to happen.”

Sentience is really, really good at context. We shift from just the end result to the why behind it. Animesh Koratana wrote a good follow-up to context graphs, and I like this excerpt:

Every organization pays a fragmentation tax: the cost of manually stitching together context that was never captured in the first place. Different functions use different tools, each with its own partial view of the same underlying reality. A context graph is infrastructure to stop paying that tax…

Your CRM stores the final deal value, not the negotiation. Your ticket system stores “resolved,” not the reasoning. Your codebase stores current state, not the two architectural debates that produced it. We’ve built trillion-dollar infrastructure for what’s true now. Almost nothing for why it became true.

The config file says `timeout=30s`. It used to say `timeout=5s`. Someone tripled it. Why? The git blame shows who. The reasoning is gone.

Clearly, Sentience helps close the reasoning gap. Instead of wondering why the config file now says `timeout=30s`, you can query the engineer’s Sentience.
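To make "decision traces" a bit more concrete, here is a rough sketch of what storing the why next to the what could look like. It is an illustration under my own assumptions, not Sentience's or anyone else's actual schema: `DecisionTrace`, its fields, and the `why` helper are hypothetical, and the author, reason, and date values are placeholders.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DecisionTrace:
    """One recorded change plus the reasoning behind it (hypothetical schema)."""
    entity: str       # what changed, e.g. a config key
    old_value: str
    new_value: str
    author: str       # the "who" that git blame already gives you
    reason: str       # the "why" that usually goes unrecorded
    decided_on: date


def why(traces, entity):
    """Return the recorded reasons for changes to an entity, oldest first."""
    matching = sorted(
        (t for t in traces if t.entity == entity),
        key=lambda t: t.decided_on,
    )
    return [f"{t.old_value} -> {t.new_value}: {t.reason}" for t in matching]


traces = [
    DecisionTrace(
        entity="timeout",
        old_value="5s",
        new_value="30s",
        author="example-engineer",  # placeholder name
        reason="placeholder for the rationale the engineer would have recorded",
        decided_on=date(2025, 1, 1),  # placeholder date
    ),
]

print(why(traces, "timeout"))
```

The point of the sketch is the `reason` field: version control already captures every other column, and the reasoning is the piece that normally lives only in someone's head.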

We’ve been living in a world of siloed data, fragmented knowledge, and woefully lacking memory or context. The technology is finally ready to underpin solutions to these problems, creating the opening for a large business to be born.

Steam, Steel, and Sentience

Sentience will be a spicy 🌶️ product that has its fair share of critics. It’s a big idea, and a controversial one. Most people think Silicon Valley already has too much data on us; this kind of product may be a bridge too far. Science fiction hasn’t done us any favors here. One of my favorite sci-fi shows (and one of the most underrated) is Pantheon on Netflix; I highly recommend it. I won’t spoil anything but the basic premise is: a tech corporation figures out how to create Uploaded Intelligence (UI) by scanning a person’s brain, then uploading their consciousness to the cloud.

Naturally, the corporation abuses UI. As an example: they upload a world-class hedge fund trader’s mind, then “imprison” her consciousness to make highly-profitable trades for eternity. The series tells the story of the various ethical and philosophical repercussions surrounding UI.

There are parallels to Sentience. No one’s brain is destroyed in the creation of Sentience (that’s how UIs are made in the show) but there are real concerns around privacy, safety, and maybe even human rights. We’re living in a sci-fi world these days, and there will be many complex moral questions to parse. Thankfully Sam and his team are building Sentience in a deeply thoughtful, safe, and intentional way. I’d also note that another dystopian future is one in which AI has no individuality, but instead asymptotes toward a single, one-size-fits-all voice.

Let’s end on a more optimistic note:

I was thinking about Sentience when reading Ivan Zhao’s essay Steam, Steel, and Digital Minds. Ivan begins by musing:

Every era is shaped by its miracle material. Steel forged the Gilded Age. Semiconductors switched on the Digital Age. Now AI has arrived as infinite minds. If history teaches us anything, those who master the material define the era.

Ivan goes on to note how difficult it is to predict the future, because we only know the past. Marshall McLuhan called this “driving to the future via the rearview mirror.” Ivan notes that ChatGPT looks a helluva lot like Google.

Not every application of AI will look so familiar. Models are improving rapidly, and tools are seeping into every facet of work and life. Claude is no longer just an app I use; it can take over my computer through Claude Code / Cowork! And Sentience isn’t an app I use; it’s a memory and context layer across all my devices and applications.

Ivan notes the distinction between Jobs’s “bicycles for the mind” and another popular phrase, “the information superhighway.” In his framing, with humans powering knowledge work we’ve been pedaling bicycles on the autobahn. It’s time for automobiles.

Sam from Sentience would argue that we’re now going 100 mph on the autobahn, but racing toward a dystopian future where one-size-fits-all AI erases human texture and uniqueness. One of my favorite New Yorker writers, Kyle Chayka, wrote a piece last week called Why Tech Bros Are Now Obsessed with Taste. Which is hilarious because we love to say at Daybreak that “taste is the last scarce resource.” 🙃🤦🏼‍♂️ We’ll come up with a new word. But there’s something important about taste, about preserving it. Some people would argue AI is the exact opposite of how we should do that, but I disagree; we’re living in an AI age now, and we have to figure out how to encode taste.

Sure, the idea of Sentience is a bit alarming: AI that knows everything about you, let loose? But the reframe is that we can build models that better reflect human differences, personality, creativity, taste. Your Sentience is different than my Sentience. And you and I still exist; we will just now have ~~bicycles~~ Bugattis at our fingertips that let our unique minds move further and faster.
