7 Ways the Internet Will Get Weirder

AI Friends, vTubing, Infinite Worlds, Deepfakes, and More

Weekly writing about how technology and people intersect. By day, I’m building Daybreak to partner with early-stage founders. By night, I’m writing Digital Native about market trends and startup opportunities.

If you haven’t subscribed, join 55,000+ weekly readers by subscribing here:

This week on the Midjourney subreddit, a user named Theblasian35 wrote: “Made an Adidas AI spec commercial during my coffee break.” The video he shared:

The video is stunning. It was made with a combination of Midjourney, Runway, and Kaiber—and it was almost certainly not made during a coffee break; my favorite response to the Reddit post:

Specifics on how it was made aside, the video gets at something bigger: we now live in a world where someone can fairly easily spin up a gorgeous, professional-grade commercial—all using affordable, accessible, intuitive online tools. What does this mean for multi-million-dollar ad budgets?

Online life is overflowing with creations and collaborations and connections. The internet is an enormous living, breathing organism—and it’s a deeply weird place. As the internet fragmented culture, it made sure that no weird is too weird: everyone can find a product to their liking, a piece of content tailor-made for them, a niche community to which they belong.

Consider if circa 2000 you’d told people that in 20 years there would be:

Millions of people with close friends they’d never met in person

People who livestream to millions through identity-obscuring anime personalities

Digital art tracked on a digital ledger and sold for millions

Internet weirdness has led to large businesses: voice chatrooms teeming with pseudonymous personalities (Discord); a video of a boy named Charlie biting his brother’s finger for an audience of 900M (YouTube); hordes of people watching other people play video games (Twitch). Explaining these concepts to someone in the 90s would be challenging.

As society and culture become progressively more digital, online life is set to get even weirder. This week’s Digital Native tracks a few ways we’ll see this happen, and what startups will catalyze the next generation of weirdness:

Pixar for Everyone

AI Friends

Personalized Ads

Pseudonymity

Deepfakes & AI Everything

Infinite Generative Worlds

Cambrian Explosion in Entrepreneurship

Let’s dive in 👇

Pixar for Everyone

Big AI news from the week was Pika Labs launching Pika 1.0, a new suite of videography tools. Essentially, Pika builds AI tools that generate and edit video. It can “stylize” videos as anime or animation, extend the length of existing videos, and expand a video’s aspect ratio.

You can play around with Pika by joining the Pika Discord (which already has an impressive 600K members), then choosing a #generate channel and typing “/create” followed by your prompt. Many Pika creations are stunning. One cool feature is animating a still image—for instance, making the fire in this clip move:

Pika, like Midjourney, seems to have its own unique aesthetic—an almost cyberpunk-esque style that’s quickly becoming emblematic of text-to-image and text-to-video generators.

If ChatGPT brought us text and Midjourney brought us images, video is the logical next step—particularly as the internet has shifted meaningfully to video over the past decade (see: the rise of TikTok and the pivot of all Meta apps to video).

The Pika launch is the latest in an impressive line of AI video tools. Runway has been releasing products at a rapid pace all year long. The Adidas “commercial” above used Runway, and actual paying Runway customers include New Balance, the Yankees, and The Late Show with Stephen Colbert. (For its part, Runway also has a motion brush tool that lets users animate an image, similar to Pika’s.)

Earlier this year, I wrote The Future of Technology Looks a Lot Like Pixar. That piece argued that the future is high-fidelity, gorgeous 3D content, not unlike Pixar.

Pixar has also played a critical role in getting us here. Apple, in preparation for its Vision Pro launch next year, announced in August that it’s partnering with Pixar, Nvidia, Adobe, and Autodesk to create a new alliance that will “drive the standardization, development, evolution, and growth” of Pixar’s Universal Scene Description (USD) technology.

USD is a technology developed by Pixar that’s become essential in building 3D content. Essentially, USD is open-source software that lets developers move their work across various 3D creation tools, unlocking use cases ranging from animation (where it began) to visual effects to gaming. Creating 3D content involves a lot of intricacies: modeling, shading, lighting, rendering. It also involves a lot of data, and that data is difficult to port across applications. USD addresses this problem by providing a shared language for packaging, assembling, and editing 3D data, and the newly formed alliance aims to standardize it.
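To make the “shared language” idea concrete, here is a minimal sketch of what a USD scene can look like in its human-readable .usda text form. It uses plain Python string building rather than Pixar’s own tooling, and the helper name `make_usda` and the prim names “World” and “Ball” are made up for illustration.

```python
# Minimal sketch: build a tiny one-sphere scene as .usda text.
# `make_usda`, "World", and "Ball" are hypothetical names for illustration.

def make_usda(radius: float) -> str:
    """Return a scene with a single sphere, written in USD's text format."""
    return "\n".join([
        "#usda 1.0",                          # file header identifying the format
        "",
        'def Xform "World"',                  # a transformable container prim
        "{",
        '    def Sphere "Ball"',              # a sphere nested inside it
        "    {",
        f"        double radius = {radius}",  # one typed attribute on the sphere
        "    }",
        "}",
        "",
    ])

scene = make_usda(2.0)
print(scene)
```

Because the scene is just a declarative description of objects and attributes, any tool that understands USD should be able to read the same file. In practice, studios would more likely author scenes through USD’s own libraries than by assembling text by hand.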

In Nvidia’s words: “USD should serve as the HTML of the metaverse: the declarative specification of the contents of a website.”

3D content will combine with generative AI to essentially allow anyone to create Pixar-level visuals. In the future, you might be able to feed a script into a program that spits out a fully-generated Pixar film. We’re already able to feed a selfie into Midjourney and have it spit out a “Pixar version” of us in seconds. Here’s mine:

Back in 2005, the media mogul Barry Diller declared:

“There’s not that much talent in the world. There are very few people in very few closets in very few rooms that are really talented and can’t get out.

People with talent and expertise at making entertainment products are not going to be displaced by 1,800 people coming up with their videos that they think are going to have an appeal.”

Yikes. It turned out that there was a lot of talent in the world—many people in many closets in many rooms. The same year that Barry Diller uttered those words, YouTube was born in a small room above a pizzeria in San Mateo.

The 2005-2023 era has shown us how much latent talent is out there, waiting to be unlocked by accessible, affordable tools. I expect AI will push things one step further. Barriers to creation will continue to fall, with a flood of content streaming onto the internet. Soon, most of that content will be generated, not rendered.

Generative tools get better every day. One of my favorite recent features on Midjourney: “More.” The way the feature works is that you give Midjourney a prompt, then instruct it to do more of something. For example, “Give me a manager sweating at work.” Then instruct the model, “More!”

Or, “Show me spicy ramen” and then add “More!” three times:

The best features of AI tools gently nudge the user to be creative in new ways. Technology has a long history of informing art: songs are three minutes long because that’s roughly how much music fit on one side of a 10-inch 78 rpm record a hundred years ago. It’ll be interesting to see the ways AI tools impact how we make art this generation.

What are the startup opportunities here?

My view is that 2024 will be the year that focus shifts from AI’s infrastructure layer to AI’s application layer. So far, we’ve seen breakout apps build on their own models—Midjourney, for instance, or Character AI. But many foundation models are now available via API, and open source models are improving at a torrid pace. I expect the next breakout applications will build on someone else’s model, instead winning on verticalization (with a resulting data advantage) or by offering a better user experience.

AI Friends

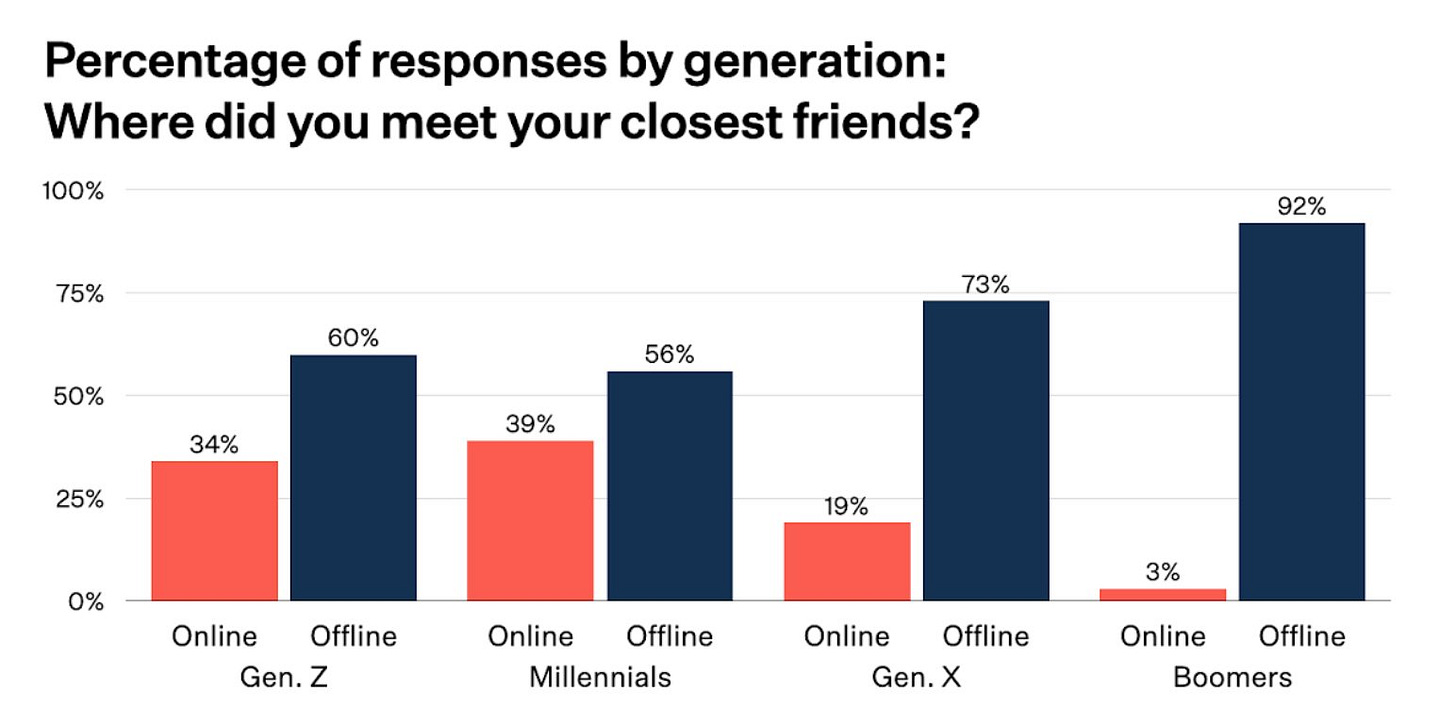

Among Boomers, 92% met their closest friends offline. No surprise there. Among Millennials and Gen Zs, offline still wins out—but it’s a lot closer. This data is a couple years old, and I wouldn’t be surprised to see younger generations reaching parity soon.

Of course, many friendships are born online and then migrate offline. But many friendships never do. Millions of people have “internet friends” that they talk to constantly and that they count among their closest friends. Maybe they met on Instagram or TikTok, Discord or Reddit, Roblox or Twitch.

A quarter-century ago, it would have seemed deeply odd that this dynamic could exist. You can imagine someone asking, “What do you mean you’ve never met them?”

Today, it seems equally odd that someone could count an AI persona among their closest friends. But I see that future coming—fast. You can already picture the questions young people will get from their parents (“Who are you texting? Rachel? What do you mean Rachel isn’t a real person?”).

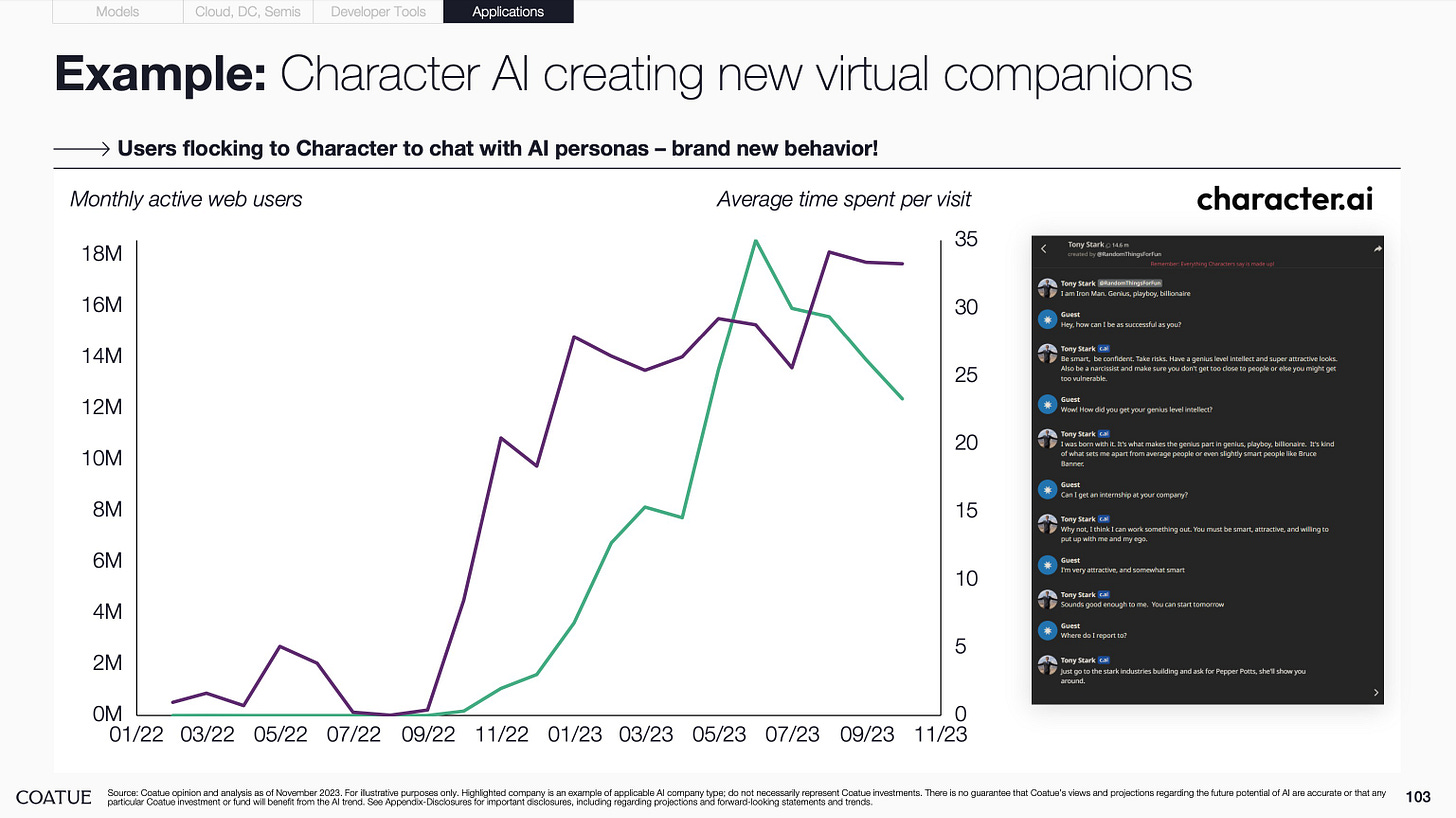

Soon, millions of people will have texting buddies who exist solely in the ether. We’ve already seen chat apps like Replika and Chai blow up this year (though many have benefited from NSFW content). Character is the most prominent, letting you chat with facsimiles of Taylor Swift and Albert Einstein and Napoleon Bonaparte. The engagement time on Character is impressive, hovering around 35 minutes per visit and nearing Snap and Instagram levels in terms of daily engagement:

When I think of AI companions, I often think of daemons in Philip Pullman’s His Dark Materials books (better known by the title of the first book, The Golden Compass). In Pullman’s fantasy world, all humans have animal counterparts called daemons that are living embodiments of their souls: one character has a snow leopard, another a monkey, another a cat. Daemons are with their humans constantly. AI companions could be like daemons—companions that learn about us and adapt to our skills and interests as we grow.

Earlier this year I made an investment in a stealth AI application for dating. Shouldn’t we all have AI companions trained on us that can offer up tailored dating matches? A more economical version of matchmakers, one that can make dating less single-player.

Online dating is now commonplace. Oxford’s Word of the Year, announced this week, is “rizz,” which means charisma / attractiveness and is used widely in online dating. Tinder is a $1.8B revenue business (!) with 75M monthly active users. Yet the “swipe” concept that Tinder pioneered hasn’t seen real innovation in a decade. It’s ripe for disruption, and a new enabling technology (LLMs) should hasten that disruption.

AI companions will also extend to work. This year in Brazil, Nissan developed chatbots on WhatsApp that direct customers to nearby dealerships. Nissan says its average response time dropped from 30 minutes to a few seconds, and WhatsApp chatbots now generate 30-40% of its leads in Brazil. This too might become table stakes for brands hoping to stay connected to customers.

Talking to AIs remains a bit weird. But it’ll become commonplace; soon, we won’t think twice about it.

Personalized Ads

Google’s 2007 acquisition of DoubleClick is arguably the greatest acquisition of all time. For $3.1B, Google acquired the backbone of its $300B-a-year advertising empire.

DoubleClick ushered in the first era of digital advertising; depending on who you ask, DoubleClick is either responsible for hyper-targeted ads that show you great products, or for the onset of “surveillance capitalism.” Maybe both.

But I suspect advertising is going to get even more pervasive, sophisticated, and downright creepy. For the most part, we’ve all still been seeing the same ads. That will change, as the new version of online targeting means ads generated specifically for you. AI ad models might learn that you respond well to Bud Light commercials showing a football game; your friend might respond better to Bud Light commercials set at the beach. Generative tools bring a whole new level of personalization.
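Here is a rough sketch of the selection half of that idea: tracking which ad variant each person responds to and serving the one with the best smoothed click rate. Everything here is hypothetical (the `AdPicker` class, the variant names, the scoring rule), and a real system would generate the creative itself and use far richer signals.

```python
from collections import defaultdict

class AdPicker:
    """Hypothetical per-user picker: serve the variant with the best click rate."""

    def __init__(self, variants):
        self.variants = list(variants)
        # stats[user][variant] = [clicks, impressions]
        self.stats = defaultdict(lambda: {v: [0, 0] for v in self.variants})

    def record(self, user, variant, clicked):
        """Log one impression and whether the user clicked."""
        entry = self.stats[user][variant]
        entry[0] += int(clicked)
        entry[1] += 1

    def pick(self, user):
        """Return the variant with the highest smoothed click rate for this user."""
        def score(variant):
            clicks, shows = self.stats[user][variant]
            # Add-one smoothing so a variant with no data scores 0.5, not 0.
            return (clicks + 1) / (shows + 2)
        return max(self.variants, key=score)

picker = AdPicker(["football", "beach"])
picker.record("you", "football", True)
picker.record("you", "football", True)
picker.record("you", "beach", False)
picker.record("friend", "beach", True)
picker.record("friend", "football", False)

your_ad = picker.pick("you")
friends_ad = picker.pick("friend")
print(your_ad, friends_ad)
```

With this toy history, you get the football spot and your friend gets the beach spot. The generative part would go one step further and create a new variant for each person rather than choosing from a fixed list.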

If Google doesn’t build this technology first, it will have to again acquire its way to a monopoly in digital advertising.

Pseudonymity

Last month, many of China’s large internet platforms announced that they would start requiring influencers to display their legal names on their public profiles. Among the platforms announcing the policy: WeChat, Douyin, Weibo, Zhihu, Xiaohongshu, and Kuaishou.

The news was met with a swift backlash. Some Chinese creators opted to quit the platforms entirely. They’d enjoyed pseudonymous fame and influence, and their entire online existences were predicated on the idea of separating offline identity from online identity. Removing that Chinese wall (pun intended) meant removing the entire point of their online existence.

Other Chinese creators used software to skirt the rules. For instance, Weibo implemented the policy for all creators with over 1M followers. The finance creator Tianjin Stock King removed 6 million of his followers, culling his community to 900,000. He used software that allows creators to remove inactive followers in large numbers. But creators will need to winnow further: Weibo announced the policy will soon extend to all creators with 500,000+ followers.

The Chinese government is clearly behind the new policies. The nation’s internet regulator, the Cyberspace Administration of China (CAC), has been cracking down on online anonymity in recent years, with the belief that anonymity and pseudonymity lead to protests and anti-CCP content.

In the West, I expect we’ll see more pseudonymity as people adopt new identities online to facilitate more self-expression. We already see this with trends like vTubing and in gaming worlds like Grand Theft Auto’s NoPixel. Many young people have vastly different personas online, scattered across various game worlds, social networks, and forums.

Deepfakes & AI Everything

Porn is often the vanguard of technology; many internet regulations in the 90s were put in place by Congress specifically because of pornography. So when it comes to AI and synthetic media, it’s no surprise porn is leading the way. A group of women in New York—victims of deepfake porn—are rallying together to call for legislation around deepfakes.

Deepfakes are concerning for this reason, and they’ll no doubt affect the 2024 election. Remember the doctored video of Nancy Pelosi back in 2019, the one that made her speech appear slurred? We’ll see similar targeted attacks on Biden, Trump, and others, this time using deepfakes.

But we’re also seeing practical uses of synthetic media, and startups are building picks and shovels for both enterprise and consumer use cases. Synthesia, for instance, lets you create videos with AI personas—enterprise use cases include learning & development, sales training, and customer service.

HeyGen is a younger company, but it’s one of the fastest-growing AI products of 2023. It’s shockingly easy to use. You can make an avatar that looks like you and speaks like you in minutes:

Create a HeyGen Account

Click “Free Instant Avatar”

Record a short video of yourself (I chose the “Tell me about yourself” prompt and spoke for 90 seconds)

Upload the video and wait a few minutes for HeyGen to create your avatar

Now make your avatar say anything you want—type a script and hear “yourself” say it

We’ll soon see deepfakes used both maliciously and benignly. We might have misinformation spread via deepfake, for instance. (There will be software that can tell us whether a video is original or AI-generated.) But we’ll also be able to send videos of “ourselves” at scale, whether to explain something to a friend or to answer a colleague’s question. Eventually there might be more AI-generated content of our likenesses online than actual content of our real selves.

Infinite Generative Worlds

In 2018, Netflix experimented with interactive content through “Bandersnatch,” a special episode of Black Mirror. Viewers were asked to make decisions during the episode that influenced the plot and eventual ending. (Filmmakers created five possible endings based on viewers’ choices.)

It’s become the norm for “explainer” content to pop up online: “That Inception Ending Explained,” for instance. Headlines after Bandersnatch went a step further: “Every Possible Bandersnatch Ending Explained.” This may be the direction entertainment is heading: more interactive, more complex, more personalized.

Interactive, personalized content is a whole lot cheaper when pixels are generated, not rendered. There’s no need to film multiple plots and multiple endings; the story can generate in real time. I expect we’ll see this first in gaming, with infinite gaming worlds that evolve and grow as you play. Our kids might one day ask, “Wait, games used to have endings?”

We’ll also see this with NPCs (non-player characters) in games. NPCs will have complex, nuanced personalities that ebb and flow based on gameplay. They’ll feel more and more like real people. ReadyPlayerMe is a startup that gives game developers tools for personalized avatars. What about a startup that powers NPC personas for thousands of game studios?

I expect we’ll ultimately see interactivity and personalization well beyond games. Exams, for instance, might be generative—they’ll increasingly probe your knowledge with personalized questions until they give you a score. No two students will take the same exam.
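A toy version of that kind of exam is easy to sketch. The example below assumes a simple “staircase” rule: a correct answer makes the next question harder and a wrong answer makes it easier. The function name, the 1 to 10 difficulty scale, and the scoring rule are all hypothetical. A generative exam would also write each question on the fly, which this sketch leaves out.

```python
def adaptive_exam(answers_correctly, levels=10, num_questions=8):
    """Run a staircase exam; return (score, list of difficulty levels asked).

    `answers_correctly(level)` is a stand-in for the student: it returns
    True if they get a question of that difficulty right.
    """
    level = levels // 2          # start in the middle of the scale
    asked = []
    score = 0                    # hardest level answered correctly so far
    for _ in range(num_questions):
        asked.append(level)
        if answers_correctly(level):
            score = max(score, level)
            level = min(levels, level + 1)   # step up after a correct answer
        else:
            level = max(1, level - 1)        # step down after a miss
    return score, asked

# Two simulated students who can handle questions up to level 7 and level 3.
strong_score, strong_path = adaptive_exam(lambda lvl: lvl <= 7)
weak_score, weak_path = adaptive_exam(lambda lvl: lvl <= 3)
print(strong_score, strong_path)
print(weak_score, weak_path)
```

The two students end up with different scores and see different sequences of questions, which is the point: the exam homes in on each person’s level instead of asking everyone the same thing.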

Content will become infinite and never-ending, adapting in real time based on the data and signals we feed it.

Cambrian Explosion in Entrepreneurship

The Economist ran a piece last week with the bold title, “Welcome to a Golden Age for Workers.” Last week we covered how among Americans born in 1940, 92% went on to earn more than their parents, while among those born in 1980, just 50% did. With income inequality at unprecedented levels—and policies stymying economic mobility—The Economist’s title is a bit rich.

It’s true that unemployment is at record lows, yes. But it’s also true that AI is expected to replace 300M jobs in the coming years. Manual labor is at risk of automation; knowledge work may be replaced by LLMs. The Economist seemed to gloss over this fact, instead focusing on AI’s productivity benefits:

“As societies age, labour is becoming scarcer and better rewarded, especially manual work that is hard to replace with technology. Governments are spending big and running economies hot, supporting demands for higher wages, and are likely to continue to do so. Artificial intelligence is giving workers, particularly less skilled ones, a productivity boost, which could lead to higher wages, too.”

AI will amplify productivity. Goldman Sachs estimates a 7% boost to global GDP in the next decade. But it will also create a massive labor market dislocation, and a frantic rush to re-skill workers for jobs of the future.

Alongside this dislocation will be the rise of the micro-business. This will seem odd; the micro-business runs counter to the structure of work over the last 100 years. But it’s a long-gestating trend with roots both technological and cultural.

On the technology front, it’s never been easier to start a business. Building blocks like AWS, Stripe, and Shopify make spinning up a company fast and intuitive. New AI tools further catalyze this trend: LangChain, for instance, or more powerful open source models.

The number of employees needed for $1M of revenue has steadily dropped with the advent of the PC and the internet. It’s set to drop even further with AI.

The rise of the micro-business is also cultural. In a new survey of Gen Zs from WGSN and Instagram, one out of three Gen Zs said “the best way to achieve wealth is to work for yourself.” We see a cultural shift to more autonomous, self-directed work. As a result, we’ll see more self-employment, small business creation, and entrepreneurship. All the norms about work and careers will be questioned.

Final Thoughts

This piece is woefully incomplete; there are many, many ways technology is going to make our lives a whole lot weirder. In many ways, society will look unrecognizable in 2033 or 2043. But the sections above capture a few key changes—more interactions with chatbots, more synthetic media, more personalization (and consequently more fragmentation of culture).

Weirdness is often a jumping-off point for startup ideas; think 140 characters and disappearing messages. What companies will underpin the next chapter in our progressively weird society?

Next week I’ll dig into 2024 predictions in a year-end piece. See you then 👋
